Living review of WELLBY cost-effectiveness analyses

Summary

- We curate a living review of cost-effectiveness analyses (CEAs) that estimate how much wellbeing charities create per dollar, measured in wellbeing-adjusted life years (WELLBYs), a new, standardised metric of value. We will add new evaluations to this living review as they are published.

- Our review found 24 charity cost-effectiveness estimates by 4 different evaluators, all produced in the last decade.

- The dataset includes different types of charities working across the world. While not fully representative, this sample does capture a wide variety of interventions and charities.

- Beyond using wellbeing, the methods across evaluators are not exactly the same; hence, different evaluators might evaluate different charities differently.

- We find that the wellbeing cost-effectiveness of charities varies dramatically. The best charities in our sample are hundreds of times better at increasing happiness than others. This implies donors can multiply their impact at no extra cost to themselves by funding the best, most cost-effective charities.

- Our sample has two key gaps. It doesn’t include any evaluations of large, well-known charities, or of typical acts of charitable giving, such as helping the homeless.

Why a living review of WELLBY CEAs?

Can we do good by giving our money to charities? A review of the cost-effectiveness of charities, using the right metric and definition of “good”, is necessary for people to make the right choices with their donations.

We take a wellbeing approach, where what ultimately matters is how people think and feel about their lives (Crisp, 2001; OECD, 2013; Moorhouse et al., 2020; Plant, 2023). This is typically measured with questions like “how happy are you?” on a scale of 0-10 (OECD, 2013). One of the main benefits of using self-reported wellbeing is that we can see, from the data, how much things like wealth and health really matter to people’s lives, rather than assuming we know.

Therefore, the impact of a charity, policy, or intervention is how much it increases wellbeing. We further quantify impact with wellbeing-adjusted life years, aka WELLBYs (see this explainer; Brazier & Tsuchiya, 2015; Layard & Oparina, 2021). One WELLBY is equivalent to a 1-point increase on a 0-10 self-reported wellbeing scale (typically life satisfaction) for 1 person for 1 year. If your wellbeing went from 7/10 to 6.5/10 for 2 years, that would be a loss of 1 WELLBY (0.5 points × 2 years).
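To make the definition concrete, here is a minimal sketch of the WELLBY arithmetic described above. The function name `wellbys` and its parameters are our own illustrative choices, not part of any evaluator's published method; the sketch assumes a constant change in wellbeing over the whole period.

```python
def wellbys(change_in_points: float, people: int = 1, years: float = 1.0) -> float:
    """Illustrative only: WELLBYs = change on the 0-10 scale x people x years.

    Assumes the change is constant over the period and identical for everyone.
    """
    return change_in_points * people * years


# The example from the text: wellbeing falls from 7 to 6.5 for 2 years.
loss = wellbys(6.5 - 7.0, people=1, years=2)
print(loss)  # -1.0, i.e. a loss of 1 WELLBY
```

Real evaluations typically model effects that change over time, so this constant-effect version is only a starting point.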

However, resources are limited, so we care not only about impact but also about cost-effectiveness. Not every charity can be funded, so which one does the most good with the money we give? How can we make the biggest difference to world happiness with what we have to spare?

We are not the only people to propose or use WELLBY cost-effectiveness. The WELLBY has been suggested as an alternative or complement to measures of health and wealth in previous editions of the World Happiness Report (Layard & Oparina, 2021), and in academia for evaluating public policy (Frijters et al., 2020, 2024). In 2021, the WELLBY methodology was adopted by the UK’s Treasury as an official way of evaluating the impact of government policies.

We see no good reason not to apply WELLBYs to charities: it gives us a scientifically credible, evidence-based way to work out how much good we do, per dollar, by giving to different charities.

And we are not the only ones to do these evaluations, so we decided to create a living review of charity cost-effectiveness analyses (CEAs) in WELLBYs. This project comes out of our chapter in the 2025 World Happiness Report (Plant et al., 2025). Here we expand on it, and update the review with new or updated evaluations.

Method and inclusion criteria

We aim to collate and present CEAs in WELLBYs of charities.

The data is publicly available here, and the code is publicly available here.

We will update this regularly. We will keep a log of different versions.

We plan to have a formal system for people to send their CEAs for consideration.

Inclusion criteria

Here are our current inclusion criteria for a CEA to be included. We reserve the right to edit these over time.

- The CEA must be published in a report, white paper, or academic publication with written explanation. Not a simple spreadsheet, or number without explanation. Not an unfinished or back-of-the-envelope calculation (BOTEC). We need to be able to refer to it / point others to it.

- The CEA must be based on empirical evidence.

- The CEA must be of an actual charity that could potentially receive funds, not of a general intervention or policy. For example, StrongMinds, a charity delivering psychotherapy, rather than an evaluation of psychotherapy itself or a policy to promote access to psychotherapy.

- The CEA must be conducted by an independent organisation, not the charity itself. Unless there is a collaboration with an external reviewer that would significantly reduce the risk of bias.

- The outcome should be improving wellbeing, using subjective wellbeing measures, such as life satisfaction, happiness, or affective mental health.

- The CEA must contain a cost-effectiveness figure, or at least a cost figure and a wellbeing impact figure that could be used to build the cost-effectiveness figure.

Variables

Simply put, a CEA of a charity would look at the costs of a charity for providing a service, how many people are reached, and the average benefit per person affected. So, if a charity spent $1 million, reached 50,000 people, and they each got a 1 WELLBY benefit, that is 50,000 WELLBYs for $1 million, a cost-effectiveness of $1 million / 50,000 = $20 per WELLBY (CpWB). This can also be presented as 50 WELLBYs created per $1,000 donated to the charity (WBp1k).
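The worked example above can be sketched in a few lines. This is a simplified illustration under the same assumptions as the text (a single cost figure, a known number of people reached, and a uniform benefit per person); the function names are hypothetical and are not taken from our published code.

```python
def cost_per_wellby(total_cost_usd: float, people_reached: int,
                    wellbys_per_person: float) -> float:
    """Cost per WELLBY (CpWB): total cost divided by total WELLBYs created."""
    total_wellbys = people_reached * wellbys_per_person
    return total_cost_usd / total_wellbys


def wellbys_per_1k(cpwb_usd: float) -> float:
    """WELLBYs per $1,000 donated (WBp1k): the reciprocal of CpWB, scaled by 1,000."""
    return 1_000 / cpwb_usd


# The example from the text: $1 million, 50,000 people, 1 WELLBY each.
cpwb = cost_per_wellby(1_000_000, 50_000, 1.0)
print(cpwb)                  # 20.0 dollars per WELLBY
print(wellbys_per_1k(cpwb))  # 50.0 WELLBYs per $1,000
```

Because WBp1k is just the reciprocal of CpWB (times 1,000), the two figures always carry the same information and differ only in how they are scaled.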

We do, however, include our subjective assessments of:

- the relevance of the evidence; namely, how directly does the evidence concern the charity itself? Often there is a tradeoff: evidence directly about a charity tends to be of lower causal quality (e.g., pre-post data), whereas more general evidence about the intervention it deploys (e.g., RCTs of psychotherapy) can have higher causal quality.

- the depth of analysis; namely, how thorough is the evaluation? Some evaluations simply combine the impact estimate from an RCT with a cost figure, whereas others involve large meta-analyses with analyses for different adjustments, etc.

These can be understood as indicating uncertainty, and therefore informing the reader of how confident they should be in the numbers presented.

Different evaluators

We collate and present information from different evaluators. We present the results as they are, at face value, with minimal adjustments (sometimes we need to combine the cost and impact reported; and we apply a ‘depth of analysis’ evaluation, see above). We do not take responsibility for how different evaluators conduct their evaluations.

Currently, there are differences in the details of how evaluators conduct WELLBY CEAs. We plan to discuss this in more detail in future versions. The appendix of our World Happiness Report chapter discusses some of these differences.

Briefly, all the evaluations produce a similar output: WELLBY cost-effectiveness. However, they differ in terms of their inputs: the evaluations are not all done in the same way. The main differences are:

- The depth of the analyses, and the quality and quantity of the evidence used.

- Modelling choices, such as adjusting for internal and external validity, and whether researchers tried to include longer-term and spillover effects to households or communities (as opposed to just the short-term impacts on direct beneficiaries).

Details about each charity CEA

The charity CEAs we collate usually consist of technical reports. For brevity and readability, we do not describe every charity CEA here. In the future, we will have an appendix with a summary of each evaluation. Meanwhile, the appendix of our World Happiness Report chapter has such a summary for most of the CEAs presented here.

Results

Wellbeing CEAs of charities only started appearing in the last few years. The earliest were in Plant (2019), although not explicitly in WELLBYs; the first in WELLBYs were all published in 2021, by Frijters and Krekel (2021), State of Life (2021a), and the Happier Lives Institute (McGuire & Plant, 2021d). The estimates in our sample come from four evaluators:

- Happier Lives Institute (8 charities): This is us, a UK wellbeing research institute doing charity evaluations focusing on finding the most cost-effective ways of improving global wellbeing.

- State of Life (3 charities): a UK organisation doing social value impact evaluation.

- Pro Bono Economics (10 charities): a UK organisation focused on wellbeing impact in the UK.

- Krekel and colleagues (3 charities): an umbrella term we use to describe the academic publications of Professor Christian Krekel from LSE and his colleagues (Frijters and Krekel, 2021; Krekel et al., 2021; Krekel et al., 2024).

Distributions of cost-effectiveness

We present the distribution of the CEAs below. Figure 1 is in cost per WELLBY. Figure 2 is in WELLBYs created per $1,000 donated, which presents the same information but compresses the bottom options rather than the top options.

Figure 1: The cost-effectiveness of charities by depth and evaluator (in cost per WELLBY).

Figure 2: The cost-effectiveness of charities by depth and evaluator (in WELLBYs created per $1,000 donated).

Explaining the distribution

This demonstrates that charities differ radically in how much wellbeing they create per dollar. Why is this?

One may argue that the differences are due to the differences between the evaluators (see Figure 3). Notably, the evaluations from the Happier Lives Institute tend to have higher cost-effectiveness than those of the other evaluators (except for that of Tearfund by State of Life). Is it that the Happier Lives Institute is particularly favourably biased towards the charities it evaluates?

Figure 3: The average cost-effectiveness of charities by evaluator.

We think not, because the pattern is plausibly explained by the evaluators having different sampling processes. Three of the evaluators (State of Life, Pro Bono Economics, and Krekel and colleagues) are, to a large extent, analysing UK charities that they were asked to analyse or which were convenient to analyse. In contrast, the Happier Lives Institute explicitly set out to look for the most cost-effective charities and focuses on charities working in low-income countries.

Hence, the better explanation is that the top charities are providing cheap, impactful interventions in low- or middle-income countries (LMICs). In contrast, the less cost-effective charities are working in high-income countries (HICs), helping people in richer parts of the world where help is much more expensive. If readers wanted to focus their donations towards charities in the UK, then the subsample of charities in HICs evaluated here is still informative.

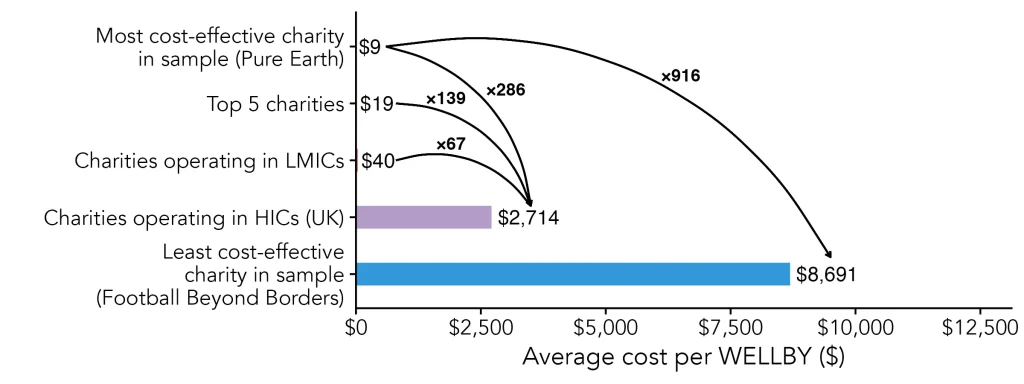

Looking across the board (see Figure 4), we see wellbeing cost-effectiveness varies substantially, from $9.49 to $8,691 per WELLBY. This is a 916-fold difference in cost-effectiveness. Contrary to the popular belief that the best charities are only 3 times better than the average, here we show that some charities can be hundreds of times better. This means that donors can multiply their impact at no extra cost to themselves by funding the best, most cost-effective charities.

Figure 4: Comparing cost-effectiveness across the categories.

Note. The values by the curves represent ratios of how many times more cost-effective.
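The fold difference between the two extremes quoted above can be checked directly. The sketch below uses only the two figures reported in the text ($9.49 and $8,691 per WELLBY); the variable names are illustrative, and the conversion to WELLBYs per $1,000 assumes the simple reciprocal relationship between the two metrics.

```python
lowest_cost = 9.49    # USD per WELLBY, most cost-effective estimate in the sample
highest_cost = 8_691  # USD per WELLBY, least cost-effective estimate in the sample

# How many times more cost-effective the best estimate is than the worst.
fold_difference = highest_cost / lowest_cost
print(round(fold_difference))  # 916

# The same comparison expressed as WELLBYs created per $1,000 donated.
best_wbp1k = 1_000 / lowest_cost
worst_wbp1k = 1_000 / highest_cost
print(best_wbp1k, worst_wbp1k)
```

The ratio is identical in either metric, since one is the reciprocal of the other; only the direction of "better" flips (a lower cost per WELLBY corresponds to more WELLBYs per $1,000).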

Limitations

For a CEA to be credible, good data and analysis are needed. But we will be waiting forever if we want perfect data and analysis. Therefore, in this living review, we present a range of evidence-based cost-effectiveness estimates of charities informed by data, not ‘facts’, and we hope further work will refine them. Given that the alternative to estimates is to rely on our intuitive judgments, we see real value in making and using estimates for decision-making purposes.

Our sample has two key gaps. First, it doesn’t include any evaluations of large, well-known charities such as Oxfam. We call charities like this Multi-Armed NGOs (MANGOs). It is difficult to evaluate them because they have so many different programmes (‘arms’), which likely have varying levels of cost-effectiveness. We discuss this more in our chapter in the 2025 World Happiness Report (Plant et al., 2025) and in our blog.

Second, we do not have WELLBY CEAs of typical acts of charitable giving, such as helping the homeless or donating to a foodbank. We explored some of this with BOTECs in our 2025 World Happiness Report chapter (Plant et al., 2025).

Future updates

- Outlining a clear, formal process for having one’s CEA added to the living review.

- Adding an appendix with details about the different charities.

- Adding more discussion about the different methods across the different evaluators.

- Some presentation enhancements and a visual identity.

- Integrating our table of findings directly in the page.

Contact us

For media enquiries about the living review, please contact hello@happierlivesinstitute.org.